I was really pleased to be invited to speak at #TMHistoryIcons in Sheffield last weekend. I found the whole day hugely inspiring and it was great to be able to meet so many people that I’ve interacted with on Twitter and catch up with some old faces.

With a blank canvas for my talk, I decided that I would speak about a topic that has been close to my heart this year, which is diversity in the curriculum – very specifically, ethnic diversity. I’ve been on the proverbial journey with this over the past couple of years, since I joined my current school, and I was keen to share what I’d been doing and why I thought it was important. This blog post is much of what I said (or intended to say).

I found that I was more nervous giving this presentation than I have been speaking at events in the past and meditated a little on why that might have been. Firstly, this is quite personal to me: I feel strongly about it and I don’t think that’s something I could really say about 50 good AfL tips or similar. Secondly, I find this topic to be a minefield. There are a lot of opinions out there. I wasn’t sure if I was best placed to speak on this topic. I very definitely have not done enough reading. I’m white and relatively privileged. I’m nervous about saying the wrong thing. However, in the end I referred myself to point one and just got on with it.

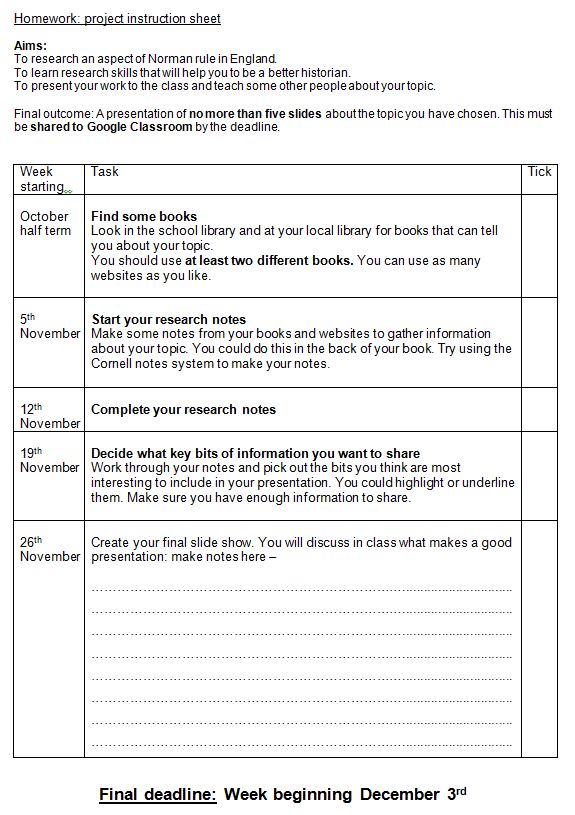

When I moved schools in 2016, for a host of reasons I didn’t find a KS3 curriculum that I could pick up and teach. In a panic, facing new courses in Y10-13 alongside another brand new member of staff, I bunged in my old KS3 curriculum that we had just finished reworking for the new GCSE. It held for a year but then the cracks started to show. It didn’t reflect the diversity of the student body, and that started to become apparent through student voice and through GCSE uptake.

At the same time, I wanted to be better at teaching Transatlantic slavery. This is very specific to the Bristol context of the school, as well as the school itself. This isn’t an area where I can afford to get it wrong for the students: they have to be empowered to debate this topic and it’s important that they have an opinion.

The personal game changer for me was this book, which I picked up on a whim and read over the Easter holiday last year. Eddo-Lodge eloquently sets out the case for improving our curriculum offering and reeled me in with an opening chapter that provided a brief history of black British History in the 20th century. It left me humbled, to the extent that I went back to work and immediately started teaching y9 a sequence of lessons on the Windrush generation, begged from Dan Lyndon-Cohen and adapted for our Bristol context. That proved timely, because a week later, news of the Windrush deportations hit the press.

I cut some lessons on the Cold War to provide the curriculum time for this. Meditating on the two topics, I wondered – is the history of the Windrush generation more relevant to our understanding of the world today than the Cold War? My conclusion was – yes, probably; though of course in a perfect world we’d teach both.

My main move towards providing a more diverse curriculum has been to ensure that all my students can see a reflection of their own lives in what I teach them. However, in the first year of pizza group, one member (name lost to the mists of time) said that he was aiming to create good ‘citizen historians’ by the end of compulsory history education: those that understand the world we live in and can interact with it discerningly. As I’ve meditated on having a diverse curriculum and considered how it applies in my previous context, I have begun to realise that it’s almost more important to provide this narrative to students who don’t come from ethnically diverse backgrounds. Arguably, my Asian, African, Caribbean students will have some discussion about their history at home. They are already aware that there is a bias in the history they’re experiencing at school.

For white students, particularly in all-white contexts, this may not be apparent and there’s no guarantee that they will access the full narrative at any point in their lives if we’re not providing it for them in school. If we teach British history without illuminating the whole picture, we are setting our students up to continue to view non-white people as outsiders. And this is just ahistorical, right? More and more evidence is coming to light about the ethnic diversity that has existed in Britain for a long time – most recently with the work done on the Skeletons of the Mary Rose.

So, by the time I got to term 6 last year, when I replanned KS3 with my colleague Nick, I had some quite strong feelings about what it should look like, and since there was a lot of chat about red lines at the time, I made some. Here they are –

Firstly, we need to teach Transatlantic Slavery better, particularly if we’re going to count this as part of our diverse curriculum. I don’t really agree that it ticks that box, but it’s a way in.

Secondly, I wanted to tackle tokenism by including the stories of minorities in our existing units, rather than dropping in a unit and declaring it solved. The British Isles has always been an extremely diverse place, so, to continue with my analogy, it’s really just a case of shining a light on the full picture, rather than relying on the traditional narratives.

Finally, the diversity of our school population should be represented in the History curriculum in every year group. This has required some creativity but I’m getting there.

To begin, then, with how we tackle Transatlantic Slavery. The first thing was to shift my own thinking. The British Slave Trade isn’t ‘black history’ – it’s largely a history of what white people did to black people. Thinking about it this way really helped me to see how we could elevate our studies. No ‘slave trader’ games. No mock auctions. No diary of a slave on the Middle Passage. Instead, the focus I try to keep running through my units is on, firstly, why so many people thought it was OK and, secondly, the stories of black people caught up in it.

Here is how we tackle the topic at Key Stage 3.

Y7 – How should we teach about the slave trade?

Y8 – Why was slavery abolished? (As part of a Sim/Diff study on 18th/19th century protest)

Y9 – How was the slave trade still affecting Britain in the 20th century?

I start in year 7 with the basics of the trade and some stories of individuals. We look at Fanny Coker, a local servant to a plantation-owning family called the Pinneys. Her mother was transported from an area that is modern Nigeria to Nevis when she was 12. Their story, and that of Fanny’s grandmother, was the subject of a project known as Daughters of Igbo Woman. I think telling the stories of individuals is important to make it clear that this story involves people – the same way it is important to look at the stories of individual victims of the Holocaust: the scale is so vast that it becomes meaningless. It can be difficult to track down stories of individual slaves in the Caribbean, so I was lucky that I was contacted by Ros Martin, one of the project’s artists, who wanted to come to do a workshop with students surrounding the project.

The year 7 scheme of work ends with the students creating a resource to teach about Transatlantic slavery to younger students, with a lofty aim of sharing this with primary school students within our trust, though I haven’t managed this yet.

The next visit to the topic comes partway through year 8. We teach a similarity and difference unit called Power to the People, which looks at abolition, the French Revolution and British political protests in the early 19th century, comparing the motives and methods of protestors. This is brand new in year 8 for this year and, sadly, I don’t teach any year 8, though my colleagues report back favourably. Previously it sat within a unit on change and continuity in Industrial Britain. I wrote all about it here.

The third part of this topic comes in year 9, when we look at the Windrush migration to Britain and consider the long-term impacts of Britain’s slave trade, in creating black citizens of the Empire that felt Britain was their mother country. To be honest, this is the section of the topic that I am least happy with at the moment. There’s a lot more that could be done with it. To that end, this week I replanned my opener lesson to the post-war world for Y9. Instead of focusing solely on the slide into Cold War, I added in overviews of decolonisation and the formation of the United Nations. As well as giving students a stronger foundation on which to build for Cold War studies – the Vietnam War is going to make a lot more sense – they now have a little insight into the UN Declaration on Human Rights and the partition of India, as well as the formation of Israel. I like this route and will follow it further. I’m a little inspired here by my reading of East West Street, which really made me think about just how different the world was after WW2. Anyway. Lots to think about there.

My other next steps with teaching Transatlantic Slavery are to include a study of African kingdoms prior to the development of TS, within the Y7 unit, and do a bit more work on the agency of slaves in fighting for their emancipation. The latter is largely inspired by my year 13s, some of whom write their coursework on this topic: the emphasis they place on figures like Toussaint L’Ouverture and the Baptist War has made me rethink how I teach it lower down the school. Don’t tell them though. I’ll never hear the end of it.

This might all change for next year, because I’m delighted to be taking part in the Historical Association’s Teacher Fellowship on Britain and Transatlantic Slavery, which begins next week. So, hopefully I will do even better with this in future.

On to the next.

I’m really worried about my curriculum looking tokenistic when it comes to diversity. I don’t want students to feel like I am trying to tick a box: this is about illuminating the full picture, remember, not briefly shining a flashlight into the corner and then going back to the same old mural that everybody is familiar with.

Here’s what I’ve done with our curriculum.

Y7 – Medieval Realms – Similarity/Difference comparison with Medieval Islamic Empires

Y8 – The History of an Idea – Islamic Centres of Learning

Y8 – The Development of Democracy – the Race Relations Act

Y9 – WW1 – Why did Empire soldiers choose to fight for Britain?

In year 7 I shortened Ye Olde Medieval Realms unit by three lessons and added in three lessons on Islamic Empires, and adjusted the assessment to be one of similarity and difference. My PGCE student, Sonia, has planned these lessons and the assessment for me this year.

In year 8, we introduced a new development study this year which I’m referring to as the History of an Idea. This is essentially a prequel to the Medicine Through Time study at GCSE – the idea in question is the Theory of the Four Humours and this unit allows us to get our teeth into Hippocrates and Galen, but it also enables us to look at how that classical knowledge was preserved, protected and critiqued in Islamic centres of learning. All the diverse bits from the old Medicine course – Constantine the African, Ibn Sina, Ibn al-Nafis and the circulation of the blood – sit within this unit.

Late on in year 8 we teach a unit on the development of democracy, running from Magna Carta to present day, that will now include a look at the Race Relations Act. This is as yet unplanned! I’m getting to it… There’s also something interesting that can be done here with the 1964 Smethwick election and Malcolm X’s visit to the UK, particularly if you’re already teaching American civil rights.

Finally, in year 9, we begin with a study of WW1 that focuses on the reasons why people joined up. This was probably the easiest win – I added a lesson on why men from other parts of the Empire chose to fight for Britain. This provides a good preview of the notion of ‘mother country’ that we can build on when looking at the Windrush generation.

On top of all of this, we have a project option for students in all year groups, to encourage research and wider reading. The Y8 independent project looks at 20th century protest movements so the Bristol Bus Boycott and the Black People’s Day of Action sit nicely within this as options for our students. In Y9, the project is formed around the memories of an older family member. What comes back is a real mixed bag and there’s usually quite a lot about moon landings and evacuees, but I’ve learned more about the Somali Civil War, Indira Gandhi, the Suez Crisis and the Polish home army, to name a few from this year. At my last school, I had a presentation from someone whose great-grandad was an Auschwitz survivor. It was after I’d taught the Holocaust unit that I found this out. A fairly humbling experience.

Where do we want to go next?

I’ve been working on using Miranda Kaufmann’s book Black Tudors with year 7 and this has been made considerably easier with the latest news from the Mary Rose, showing that the crew were more diverse than previously realised. The focus for our Tudors unit is ‘myth-busters’ and largely considers popular interpretations of the Tudor period – was Bloody Mary really bloody, did Henry really break with Rome so he could get a divorce, etc. So, ‘Was Tudor England really all white?’ will sit in there quite nicely, particularly alongside interpretations such as the Cowdray Engraving, which turns out to be an 18th century reproduction of the original – is the reproduction faithful?

In year 8, we’ve realised this year that we need a more robust unit on the British Empire. My usual focus for this topic is on the impact of the Empire on Britain. It feels a little paternalistic to teach about the empire by only considering its impact overseas, as though the relationship were one way – again, this does not illuminate the full picture.

In year 9, as well as the aforementioned changes to our post-WW2 study, I’d like to pick up the theme of Empire again by looking at the contribution of empire soldiers in WW2. Last week I heard Hazel Carby, Professor of African-American studies and American studies at Yale, speak about her new book, Imperial Intimacies, which traces her father’s experiences growing up in Jamaica, joining the RAF and subsequently settling in Britain with a Welsh wife. (As an aside here, having looked up her Wikipedia page, I LOVE that she was studying at Portsmouth at the same time as my dad…perhaps they met). Professor Carby talked about the hardships faced in Jamaica when merchant shipping was interrupted by WW2, leading to a huge spike in the crime rate and abject poverty. Her father joined the RAF as a way out of this; when Carby was asked by her teacher at school what her father did and replied that he was an RAF airman who’d fought for Britain in WW2, her teacher replied that she must be lying because only white people fought in WW2. The ‘Britain stood alone’ narrative is tenacious – though it does not shine a light on the whole story.

At GCSE, it’s a little more difficult. The best route is to choose a board with a migration thematic study and diverse offerings in the depth and period blocks, but it’s not always possible and GCSE has to be driven by a whole range of things. We teach Edexcel and I sneak bits in where I can. As time passes and I get better at teaching the GCSE, there will be more time for thinking about bits of history that are invisible within the spec. For now, we’ve tried to contextualise our GCSE with some smart planning at KS3, so that students should naturally bring this in during class discussion. Some examples of this – Y7 look at the origins of the slave trade in Elizabethan England and diversity in the Tudor era. The year 8 unit helps to provide wider world context for medicine.

A-level is what I have changed least, but we do offer an NEA on Transatlantic slavery, alongside one on 17th century witchcraft. For current y12, we’re planning to offer them completely free choice, hopefully enabling students in future to build on their independent projects from Y9 and think about researching further into topics that they feel interested in. Where will it take them? We’ll see.

Here end my thoughts on the topic, to date. I am aware that there is so much still to be done. I am aware that there is so much that could come under the umbrella of diversity that I haven’t even sniffed at. I apologise if I’ve used the wrong terms or said anything fist-bitingly ignorant. I’m going to keep reading and hopefully it will get better. Hopefully I’m going to inspire a broader cross-section of my students to continue their studies of history beyond school so that they can come back as history teachers and do a better job of this than me.

I’d love to hear about what other people are doing with the curriculum in their schools to illuminate the whole picture. Please do get in touch. If you want to read more, Nick Dennis has put together a very comprehensive reading list and also wrote a good article for Teaching History in which he covers some more ideas for adding diversity at KS4. The books I’ve been reading are as follows:

Reni Eddo-Lodge – Why I’m No Longer Talking to White People About Race

David Olusoga – Black and British: A Forgotten History

Adam Hochschild – Bury the Chains

Miranda Kaufmann – Black Tudors

Hakim Adi – Black British History: New Perspectives